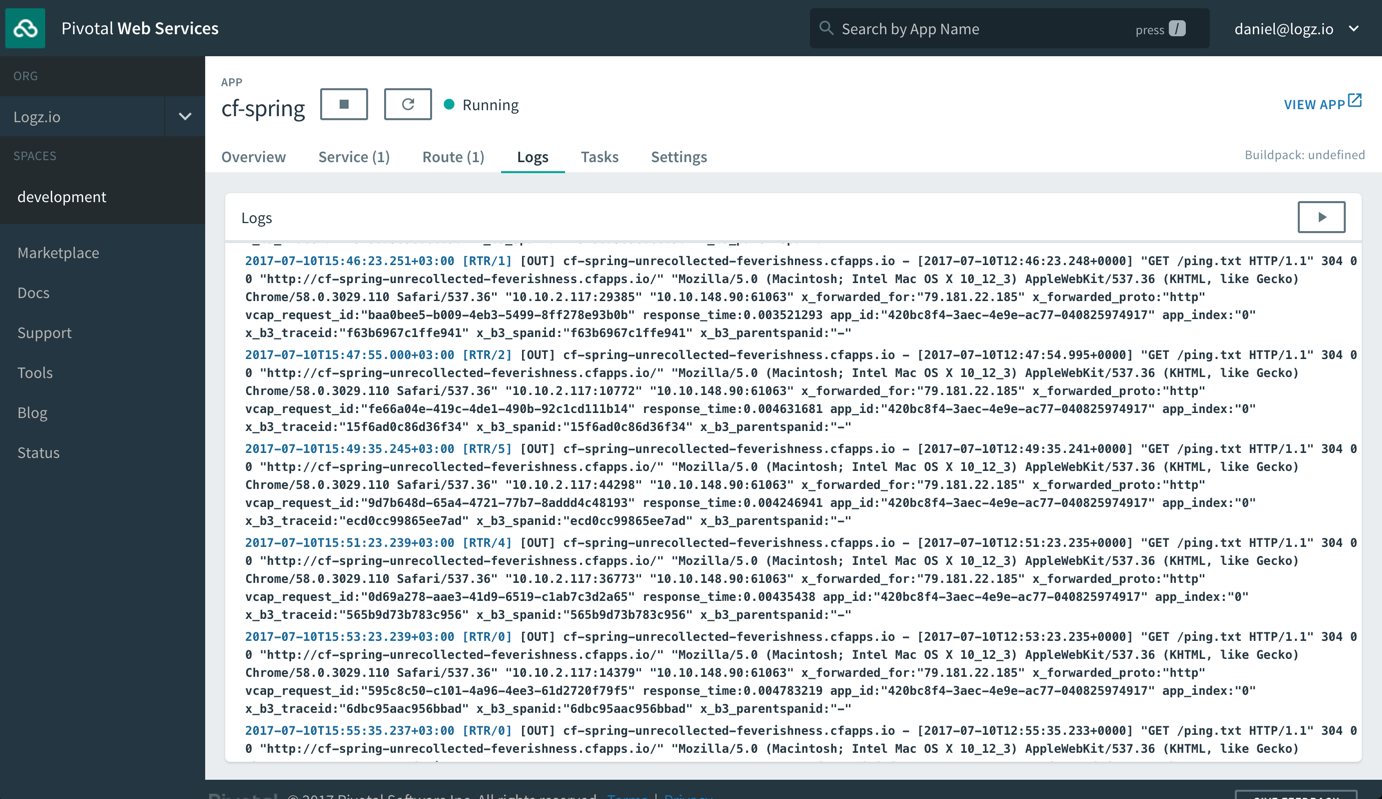

Elasticsearch is a distributed, JSON-based search and analytics engine that stores and indexes data (log entries in this case) in a scalable and manageable way.

Kibana is a rich UI for analyzing and easily accessing data in Elasticsearch.

In this post, we will look at how to use the above-mentioned components to implement a centralized log analyzer that collects and extracts logs from Docker containers.

For the purposes of this article, I have used two t2.small AWS EC2 instances running Ubuntu 18.04, with Docker and Docker Compose installed. Instance 1 is running a Tomcat webapp and instance 2 is running the ELK stack (Elasticsearch, Logstash, Kibana).

In Linux, Docker logs can by default be found in this location:

var/lib/docker/containers//-json.log

All Docker logs will be collected via Filebeat running inside the host machine as a container. Filebeat will be installed on each Docker host machine (we will be using a custom Filebeat Dockerfile and systemd unit for this, which will be explained in the Configuring Filebeat section).

Our Tomcat webapp will write logs to the above location by using the default Docker logging driver. Filebeat will then extract logs from that location and push them towards Logstash.

Another important thing to note is that, other than application-generated logs, we also need metadata associated with the containers, such as container name, image, tags, host, etc. This will allow us to identify the exact host and container generating the logs. This data can also be sent easily by Filebeat along with the application log entries.

High Level Architecture - Instance 1 | Instance 2

With this kind of implementation, the running containers don't need to worry about the logging driver or how logs are collected and pushed. This is often known as the single responsibility principle.

Configuring Filebeat

For this section the filebeat.yml and Dockerfile were obtained from Bruno COSTE's sample-filebeat-docker-logging GitHub repo. But since I have made several changes to filebeat.yml according to the requirements of this article, I have hosted those, along with the systemd service file, separately on my own repo. You can access the repo here.

As the initial step, you need to update your filebeat.yml file, which contains the Filebeat configuration. Given below is a sample filebeat.yml file you can use. Note line 21, the output.logstash field and the hosts field. I have configured it to the IP address of the server where I'm running my ELK stack, but you can modify it if you are running Logstash on a separate server. By default Logstash listens for Filebeat on port 5044. To learn more about Filebeat Docker configuration parameters, look here.

After that you can create your own Filebeat Docker image by using the following Dockerfile. Once the image is built, you can push it to your Docker repository.
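To make the log collection step a little more concrete, here is a minimal Python sketch of what a log shipper has to do with the files written by Docker's default json-file logging driver. Each line in those files is a JSON object with `log`, `stream` and `time` keys. The function name `parse_docker_log_line`, the output field names and the container metadata values are hypothetical and chosen only for illustration; this is not Filebeat's actual implementation or event schema.

```python
import json


def parse_docker_log_line(line, container_meta):
    """Parse one line written by Docker's json-file logging driver.

    The output field names are illustrative assumptions, not Filebeat's schema.
    """
    entry = json.loads(line)
    return {
        "message": entry["log"].rstrip("\n"),  # drop the trailing newline Docker keeps
        "stream": entry["stream"],             # "stdout" or "stderr"
        "timestamp": entry["time"],
        "container": container_meta,           # metadata attached by the shipper
    }


# A sample line in the json-file format (the content is made up for this example).
sample = '{"log":"Server startup in 1234 ms\\n","stream":"stdout","time":"2019-01-01T10:00:00.000000000Z"}'

# Hypothetical container metadata; a real shipper would look this up from Docker.
meta = {"name": "tomcat-webapp", "image": "tomcat:9", "host": "instance-1"}

event = parse_docker_log_line(sample, meta)
print(json.dumps(event, indent=2))
```

Attaching the container name, image and host to every entry is what later lets you filter logs in Kibana by the exact container that produced them.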
